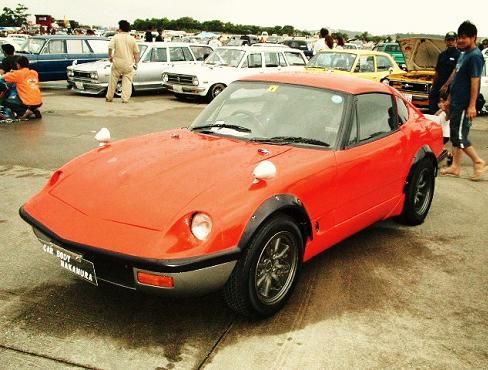

Obviously this isn’t really a Nissan Fairlady Z. What is it? Put your best guess in the comments below and we’ll have to use the no-googling honor system here. Find out on Sunday! UPDATE:

As 2,591 of you already said, it is indeed a Suzuki Cappuccino!

[Image: Susie Blog]

Looks like a Miata.

I believe that is a Eunos Roadster ( Miata in USA) with some funky Z30 body transformation kit. -j

Suzuki Cappuccino?????? Not certain, but I think the A-pillars & roof give it away.

I think this as well.

x3.

Or maybe it’s an Every Joy Pop Turbo Fairlady Z!

x4

It look to be an AVANTI with a G-NOSE conversion or an OPEL GT with a G-NOSE conversion

Judging by the shape of the roof, the 3/4 window and the utterly diminutive size, I vote for a Suzuki Cappuccino.

The wheelbase looks too short to be a Miata/Roadster…

…so I’m going to say that it’s a Suzuki Cappuccino.

Suzuki Cappaccino

Beat me too it 🙁

choro-q!

Well done Nakazoto! Along with the tiny size, the windscreen frame and the 1/4 windows are the giveaway.

Miata with body kit from my memory. The Toyota 2000GT kit on a Miata looks nice too.

Lotus Esprit…me thinks !

Lotus Elan

I think it’s a TVR Vixen. Or some other type of old TVR. But if it is Japanese, then I have no clue. And judging by the fact that all the cars in the picture behind it are Japanese, and that this is JNC, I am probably wrong.

Yeah, the majority are correct, thats a Suzuki Cappuccino turned into a 240Z. Very interesting. The sides are only slightly altered, taking the upper air vent off to leave only one behind each from wheel. The bumper bottom looks slightly Lotus Elan like becuase of the different colour from the rest of the car. The are more conversions like these on the net. I once saw a Dodge Viper Cappuccino!! Man I wish Suzuki would get their finger out and make a follow up to the Cappuccino. They could just call it ‘Expresso’ or what about ‘Cafe Latte’!?

Z body kit on a Suzuki Cappuccino. I own a Cappuccino and had two other so I a recognize the shape and the details like the side windscreen and air intake. I wonder what happened to the removable targa roof?

it’s still there, just hard to see from the pic angle.

Okinawa!

ehhhh – doesn’t do much for me.

Suzuki Cappaccino

Don’t know but I like the Hakosuka wagon in the background!

A Saab Sonnet?

An abomination?

A Porsche 911 turned into a Fairlady Z.. no that would be wrong on so many cant beat them join them levels.

2000GT kit on Miata

http://carscoop.blogspot.com/2007/09/hiroshi-toyota-2000gt-007-replica-based.html

Suzuki Cappuccino, Suzuki Cappuccino, Suzuki Cappuccino, Suzuki Cappuccino!!!!!!!

Suzuki cappuccino it is too small to be a miata.

Suzuki Cappuccino. The fender vent on the side, gives it away.

A Honda Beat?

Suziki Cappucino or NB Miata

cappucino Z , i think- haha, it’s custom

I vote Suzuki Cappuccino

I reckon its a PS30Z copy. But it does look Miata/MX5 based.

definitely a cappuccino. recognise that half-a-side-vent anywhere! too bad i didn’t see this post until 36 other people presumably said ITS A CAPPUCCINO

That shape and shade of orange/red reminds me a lot of a Lotus Elan

http://www.theengineer.co.uk/Pictures/web/u/d/d/Lotus_Elan_Sprint__Classic_Car_Show__400.jpg

Suzuki Cappuccino

its already sunday nd no answer

Opel GT…look at the nose

yep opel GT. and that is not a gnose kit , thats what they look like its even the stock opel bumpers.

all they did was get rid of the roll over head lights

Suzuki Cappuccino with a 240z kit

Opel